The HTTPS Staging Site Myth: How Bots and AI Expose Your “Hidden” E‑Commerce URLs

You have a secret project—a massive holiday promotional pricing strategy, a headless checkout redesign, or a highly anticipated exclusive product drop. And you built it on a “secret” web address that nobody outside your retail brand has. To be extra careful and adhere to modern web standards, your e‑commerce IT team adds the little security padlock to the address bar. But what if the very act of making your site “secure” was the one thing that announced its existence to the entire world?

It is one of the most common cybersecurity myths in modern e‑commerce and web development: the belief that if nobody has the link, nobody can find the website. This idea, known as “security by obscurity,” feels intuitive. After all, if you do not share staging.yournewstore.com with anyone, how could they possibly find it?

Unfortunately, this provides a dangerous false sense of safety. In practice, automated tools, AI shopping scrapers, and curious retail competitors do not need your link to discover what you are building. They have a different, surprisingly public source of information. The source of this leak is not a sophisticated data breach; it is a little‑known side effect of a feature we are all trained to trust unconditionally.

That padlock icon in your browser does mean your connection is private and secure. However, the process of getting that certificate of trust for your e‑commerce storefront is not private at all. It involves a public registration step that can inadvertently place your hidden retail projects in the spotlight, exposing your raw data to search engines and AI agents before you are ready.

What That Padlock Really Means: Your Store’s Official ID Card

We have all learned to trust the padlock icon in our browser, but what is it actually trusting? Before your browser secures your connection to process a credit card, it first asks the website for identification. This is where an SSL (Secure Sockets Layer) or TLS (Transport Layer Security) certificate comes in.

Think of it as an e‑commerce website’s official ID card. Its primary job is not to hide the site’s existence, but to prove that the storefront you are visiting is authentic—that MyCoolShop.com is really MyCoolShop.com and not an imposter trying to steal your customers’ payment information.

Of course, just as you cannot print your own driver’s license at home, a merchant cannot create its own trusted certificate. It must get one from a Certificate Authority (CA)—a recognized, highly regulated organization that acts like a DMV for the internet. These CAs rigorously verify that the retailer requesting the certificate actually owns the web address, adding a crucial layer of trust. Without this verification, anyone could spoof a checkout page, making online retail impossible.

Ultimately, the padlock’s promise is about authenticity and encryption: you have connected to the legitimate retailer, and nobody in between can read or alter the transaction data. But to keep everyone honest, this system relies on a surprising rule, one with a side effect that can unintentionally expose your unreleased catalogs and “secret” dev environments to the public.

If you want a deeper technical refresher on how certificate management works in practice, guides such as SwissSign’s overview of certificate management best practices are a good reference point.

The Hidden Rule of Security: The Public Phonebook for Websites

This whole system of website “ID cards” sounds robust, but it relies on trusting the “ID offices” (the Certificate Authorities) to never make a mistake or get tricked by a malicious actor. What if a sophisticated attacker convinced a CA to issue a fake ID card for your brand’s checkout page?

To solve this potential crisis of trust, the internet community added a powerful, mandatory rule. Think of it like a public phonebook for websites. Every time a publicly trusted “Website ID Card” (an SSL/TLS certificate) is issued for a web address, a note about it must be published in this globally shared book. This is not an optional step for highly secure sites; it is effectively a hard requirement for modern browsers like Chrome and Safari to show the padlock at all.

In the real world, this system is called Certificate Transparency (CT): a public, tamper‑evident auditing system for certificates. Its scale is enormous. In a large‑scale analysis of CT logs, Splunk’s SURGe team downloaded the contents of 15 major Certificate Transparency logs and ingested roughly five billion unique TLS certificates, as described in their article “Trust Unearned? Evaluating CA Trustworthiness Across 5 Billion Certificates.”

These logs allow IT teams, security researchers, and the general public to watch for wrongly issued certificates and protect brand integrity. It is a brilliant security feature designed to build more trust in online transactions.

Here is the crucial side effect, however: this digital phonebook is completely open for everyone to read, including automated retail bots. As soon as your IT team gets a certificate for blackfriday-test.yourstore.com, its existence is announced to the world, and automated monitors often pick it up within minutes.

You do not need specialist tools to see this in action. Free public utilities, such as Certificate Transparency checkers and subdomain finders, show how easy it is to pull newly issued hostnames directly from CT logs.
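What a CT log records is the certificate itself, including every hostname listed in its Subject Alternative Name (SAN) extension, so a single certificate that covers several hosts announces all of them at once. As a minimal sketch, Python’s standard ssl module can show those names for any live site; the helper names and the placeholder host are illustrative assumptions, not part of any CT tool.

```python
import socket
import ssl


def fetch_peercert(host: str, port: int = 443, timeout: float = 10.0) -> dict:
    """Open a verified TLS connection and return the server certificate as a dict."""
    context = ssl.create_default_context()  # verifies the chain and the hostname
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            return tls.getpeercert()


def certificate_hostnames(peercert: dict) -> list[str]:
    """Return the DNS names in the certificate's Subject Alternative Name list.

    These are the same hostnames that become public once the certificate is logged.
    """
    return [value for kind, value in peercert.get("subjectAltName", ()) if kind == "DNS"]


# Usage (needs network access; "www.example.com" is only a placeholder host):
# print(certificate_hostnames(fetch_peercert("www.example.com")))
```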

How Your “Secret” Staging Site Ends Up on Google

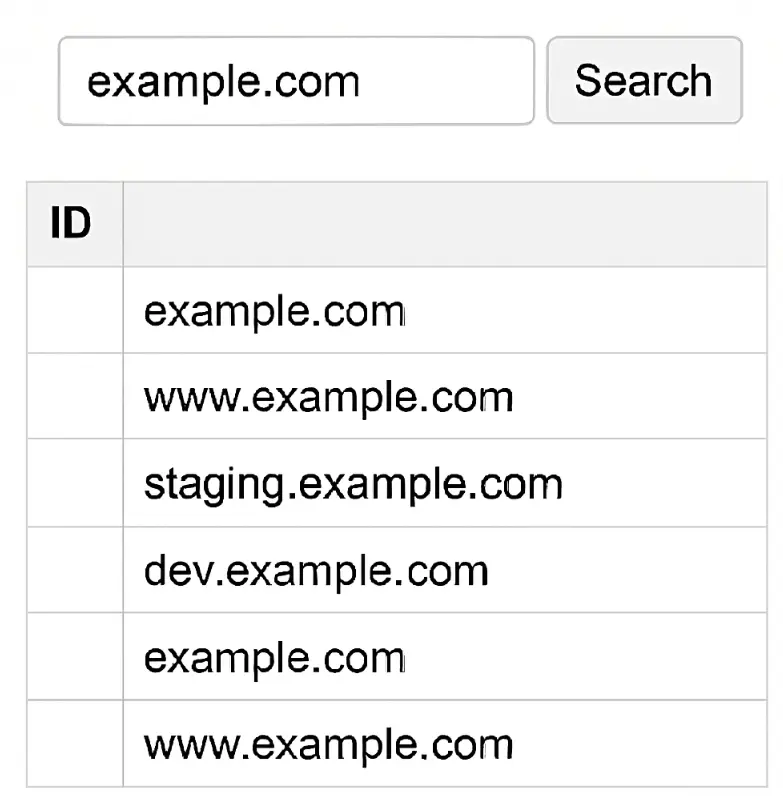

Search results table UI showing filtered domain records for “example.com”.

You might think that as long as you do not share the link to staging.yournewstore.com in a marketing email, it remains your little secret. After all, if there are no links pointing to it, how could a search engine possibly find it? This common assumption is one of the biggest myths of the web.

The internet is not just made up of shoppers clicking around on category pages; it is heavily patrolled by millions of automated bots. Many of these bots have one specific, relentless job: to constantly read the new entries in those global Certificate Transparency logs as they happen in real time.
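To make “constantly read the new entries” concrete, here is a minimal polling sketch, assuming a log that exposes the RFC 6962 HTTP API (its get-sth and get-entries endpoints); some newer logs publish a different, tile-based interface instead. The log URL is a hypothetical placeholder, and decoding the certificates inside each entry is deliberately left out.

```python
import json
import urllib.request


def fetch_json(url: str, timeout: float = 30.0) -> dict:
    """GET a URL and decode its JSON body."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return json.load(resp)


def poll_new_entries(log_url: str, next_index: int, batch_size: int = 256, fetch=fetch_json):
    """Fetch every entry appended to an RFC 6962 log since `next_index`.

    Returns (new_next_index, entries). Each entry is the raw JSON object from
    get-entries (base64 "leaf_input" and "extra_data" fields).
    """
    # The signed tree head tells us how many entries the log currently holds.
    tree_size = fetch(f"{log_url}/ct/v1/get-sth")["tree_size"]
    entries = []
    while next_index < tree_size:
        end = min(next_index + batch_size, tree_size) - 1  # `end` is inclusive
        page = fetch(f"{log_url}/ct/v1/get-entries?start={next_index}&end={end}")["entries"]
        if not page:
            break  # nothing returned; try again on the next poll
        entries.extend(page)
        next_index += len(page)  # logs may return fewer entries than requested
    return next_index, entries


# A monitor would persist next_index and call this in a loop, for example:
# next_index, batch = poll_new_entries("https://ct.example.net/log", next_index)
```

Passing the fetch function in as a parameter keeps the paging logic testable without a live log.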

These programs are specifically designed to spot common e‑commerce development prefixes such as dev., test., uat., beta., and staging., because these subdomains often point to the most interesting, unfinished, or sensitive retail projects a company is working on, like backend ERP integrations or new headless commerce architectures. A simple script searching these public logs can instantly list every subdomain of a retail brand that has been issued a publicly trusted certificate, laying out its internal infrastructure for anyone to see.
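A minimal sketch of such a script, assuming search results in the JSON shape returned by the public crt.sh service (a list of records whose “name_value” field holds one or more hostnames separated by newlines). The prefix list mirrors the examples above; the domain and function names are illustrative.

```python
import json
import re
import urllib.parse
import urllib.request

# Pre-production markers from the examples above; a real scanner would use a longer list.
DEV_TOKENS = {"dev", "test", "uat", "beta", "staging"}


def search_ct(domain: str, timeout: float = 60.0) -> list:
    """Query crt.sh for certificates covering any subdomain of `domain` (assumed JSON output)."""
    query = urllib.parse.urlencode({"q": f"%.{domain}", "output": "json"})
    with urllib.request.urlopen(f"https://crt.sh/?{query}", timeout=timeout) as resp:
        return json.load(resp)


def hostnames_from_records(records: list, domain: str) -> list[str]:
    """Collect unique hostnames under `domain`, folding "*.x" wildcards into "x"."""
    names = set()
    for record in records:
        for name in record.get("name_value", "").splitlines():
            name = name.strip().lower().removeprefix("*.")
            if name == domain or name.endswith("." + domain):
                names.add(name)
    return sorted(names)


def looks_like_preproduction(hostname: str, domain: str) -> bool:
    """True if the part left of `domain` contains a dev/test/staging-style word."""
    subdomain = hostname[: -len(domain)].lower()
    return any(token in DEV_TOKENS for token in re.split(r"[^a-z]+", subdomain))


# Usage (needs network access; crt.sh can be slow or rate-limited):
# names = hostnames_from_records(search_ct("yournewstore.com"), "yournewstore.com")
# print([n for n in names if looks_like_preproduction(n, "yournewstore.com")])
```

Splitting each label on non-letters lets “blackfriday-test” match “test” without flagging unrelated names such as “testimonials”.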

And who runs some of the most sophisticated bots on the planet? Search engines and AI companies. Search platforms already use many non‑link signals to find fresh URLs, and Certificate Transparency logs can serve as a discovery source for new hostnames that never appear in public navigation. Because of this, the very act of securing your test site can act as a flashing neon signal inviting AI shopping agents and crawlers to come take a look. Your “secret” product drop is not protected by an obscure URL; it is publicly announced, and it can land in search results or AI training datasets before it is ready for its debut.

For a neutral technical overview of CT and how browsers use it, Mozilla’s Certificate Transparency documentation is a solid starting point.

The Real‑World Risks of an Exposed E‑Commerce Test Site

At this point, you might be thinking, “So what if someone finds my test site? It is just a draft. There are no real transactions happening.” While it seems harmless, an exposed staging site is more than just an embarrassing peek behind the curtain; it creates genuine, quantifiable risks for your e‑commerce operations.

Suddenly, your private digital workshop has an audience, which leads to several immediate business problems:

Competitors see your next move - They can discover a new product launch, a major rebranding effort, or your Black Friday promotional pricing matrix weeks before you have officially launched. This allows rival merchants to adjust their own marketing to steal your thunder and erase your competitive advantage.

Your reputation takes a hit with customers and AI - Potential customers may stumble upon a buggy, unfinished version of your site with broken add‑to‑cart buttons. More importantly, if an AI shopping agent crawls your staging site and ingests “dummy” pricing or a broken return policy, that inaccurate data becomes part of what the AI “knows” about your brand. Recent customer loyalty statistics from SAP Emarsys highlight just how fragile loyalty has become, with a bad experience enough to push a large share of customers to switch brands despite prior “love and trust.” When that kind of impatience is the norm, one broken staging experience surfacing in AI or search can do lasting damage.

Attackers find an easy target - This is the most critical risk. Staging sites are frequently less secure than production storefronts. They might be running older versions of software, have debugging tools left turned on, or connect to clones of your production database containing real customer PII (Personally Identifiable Information). Summaries of IBM’s 2024 research, such as one breakdown of the real cost of a data breach, put the global average cost of a data breach at around 4.88 million USD, with misconfigurations, such as exposed test systems and misconfigured cloud resources, appearing as recurring root causes.

For attackers, an exposed staging server is a highly attractive, relatively soft target on the edge of your broader retail network.

The Simplest Fix: Put a Bouncer at the Front Door

Fortunately, the most direct way to protect a hidden site once Certificate Transparency logs have revealed its address is also the easiest to understand. Think of it like putting a strict bouncer at the front door of your development server.

Before anyone—whether it is a human visitor, a Google crawler, or a malicious script—can even get a peek inside to see your new checkout flow or product photography, they are stopped and asked for a password. This single step prevents search engines, competitors, and curious visitors from accessing your work‑in‑progress, even if they have the exact, direct link from the public logs. It is the fastest way to turn an exposed link into a truly private workspace.

This method, technically known as HTTP Basic Authentication (often just “Basic Auth”), is a fundamental part of staging‑site security. When a visitor arrives, the server does not send your website’s code. Instead, it answers the request with a “401 Unauthorized” status and a WWW-Authenticate challenge, which makes the browser show a simple, universal pop‑up box demanding a username and password. Until the visitor supplies the correct credentials, the server keeps returning that 401 error and refuses to send any page content.
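The exchange can be sketched as a small Python WSGI middleware. This is an illustration, not a recommended production setup: most teams switch Basic Auth on in their web server, CDN, or hosting dashboard instead, and the function and credential names here are hypothetical. Basic Auth only base64‑encodes the username and password, so it relies on the HTTPS connection the staging site already has to keep them private.

```python
import base64
import hmac


def require_basic_auth(app, username: str, password: str, realm: str = "Staging"):
    """Wrap a WSGI app so every request must carry valid HTTP Basic credentials."""
    expected = base64.b64encode(f"{username}:{password}".encode("utf-8"))

    def guarded(environ, start_response):
        scheme, _, token = environ.get("HTTP_AUTHORIZATION", "").partition(" ")
        # WSGI header values are latin-1 strings; compare as bytes in constant time.
        supplied = token.strip().encode("latin-1", errors="replace")
        if scheme.lower() == "basic" and hmac.compare_digest(supplied, expected):
            return app(environ, start_response)
        # Missing or wrong credentials: the wrapped storefront never runs. The
        # WWW-Authenticate challenge is what makes the browser show its login box.
        start_response("401 Unauthorized", [
            ("WWW-Authenticate", f'Basic realm="{realm}", charset="UTF-8"'),
            ("Content-Type", "text/plain; charset=utf-8"),
        ])
        return [b"Authentication required.\n"]

    return guarded


# Usage with the standard-library server (placeholder app and credentials):
# from wsgiref.simple_server import make_server
# make_server("127.0.0.1", 8000, require_basic_auth(storefront, "team", "change-me")).serve_forever()
```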

The storefront itself remains completely hidden behind this prompt, effectively blocking automated bots and unwanted human eyes from ever seeing, scraping, or indexing your retail catalog. For most e‑commerce development projects, HTTP authentication is the single most effective protection against the exposure caused by SSL/TLS certificates. Even simple tutorials like Netlify’s guide on restricting access to sites with passwords show how straightforward this pattern is.

Two More Ways to Keep Your Dev Site Private

While a strong HTTP password is your best defense against casual discovery and automated bots, technical teams can add more layers of privacy for even greater peace of mind and stricter compliance. Think of these next two methods not as replacements for a password, but as powerful supplements that work in different ways to protect your operational data.

Create a Digital Guest List (IP Allow‑Listing)

For ultimate exclusivity, you can create a strict digital “guest list” for your server. This technique, known as IP allow‑listing, allows you to configure your web server to only accept visitors from specific, pre‑approved internet addresses (IPs). For example, you can restrict access so that the site only loads if the visitor is connected to your corporate headquarters’ Wi‑Fi or your e‑commerce agency’s secure VPN.

If a competitor or a bot tries to visit from an address not on that approved list, the request is refused outright, and a firewall rule can go further and drop the connection entirely. It is as if the site does not exist for them at all. This is an extremely effective way of securing staging sites from the public web, especially when they mirror production data or systems. Guidance on IP‑based access control is often included in certificate management best practices and broader PKI/DevSecOps documentation.
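At the application layer, the guest‑list check can be sketched with Python’s standard ipaddress module. The ranges below are reserved documentation addresses standing in for your real office and VPN egress IPs, and the middleware name is hypothetical. An application can only refuse the request (here with 403 Forbidden); silently dropping the connection is a job for a firewall or cloud security group. Behind a CDN or load balancer, REMOTE_ADDR holds the proxy’s address, so the check belongs at that layer or must use the proxy’s trusted client‑IP header.

```python
import ipaddress

# Hypothetical guest list: documentation ranges stand in for real egress IPs.
ALLOWED_NETWORKS = [
    ipaddress.ip_network("203.0.113.0/24"),      # e.g. head-office range
    ipaddress.ip_network("198.51.100.17/32"),    # e.g. agency VPN exit node
    ipaddress.ip_network("2001:db8:1234::/48"),  # e.g. IPv6 office range
]


def is_allowed(client_ip: str, networks=ALLOWED_NETWORKS) -> bool:
    """True if client_ip parses as an IP address inside one of the allowed networks."""
    try:
        addr = ipaddress.ip_address(client_ip)
    except ValueError:
        return False  # missing or malformed address: not on the list
    # Membership is simply False when the IPv4/IPv6 versions differ.
    return any(addr in net for net in networks)


def require_allowed_ip(app, networks=ALLOWED_NETWORKS):
    """Wrap a WSGI app so only clients from allowed networks reach it."""
    def guarded(environ, start_response):
        if is_allowed(environ.get("REMOTE_ADDR", ""), networks):
            return app(environ, start_response)
        start_response("403 Forbidden", [("Content-Type", "text/plain; charset=utf-8")])
        return [b"Forbidden\n"]
    return guarded
```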

Leave a Note for Search Engines (The Noindex Tag)

Another common tactic involves leaving a polite note for search engines within the site’s code. By adding a simple meta tag such as:

```html
<meta name="robots" content="noindex, nofollow">
```

to the head section of your HTML pages, you are officially asking compliant services like Google and Bing to skip over your site and not include your test products in their search results, even if they found the URL in the certificate logs.

It is crucial to remember, however, that a noindex tag is not a security lock. It relies entirely on the honor system. It will not stop a malicious hacker who has the direct link from visiting, nor will it stop aggressive pricing scrapers that ignore robot directives. It simply manages your public visibility on legitimate search engines.
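The same directives can also be sent as an X-Robots-Tag HTTP response header, which Google documents as an alternative to the meta tag and which also covers non‑HTML files such as PDFs or product images. Here is a minimal sketch, again as a hypothetical WSGI middleware, that stamps the header on every staging response. Like the meta tag, it is only seen by crawlers that are actually able to fetch the page.

```python
def add_noindex_header(app, directive: str = "noindex, nofollow"):
    """Wrap a WSGI app so every response carries an X-Robots-Tag header."""
    def wrapped(environ, start_response):
        def start_with_header(status, headers, exc_info=None):
            # Drop any X-Robots-Tag the app set, then add the staging directive.
            headers = [(k, v) for k, v in headers if k.lower() != "x-robots-tag"]
            headers.append(("X-Robots-Tag", directive))
            if exc_info is None:
                return start_response(status, headers)
            return start_response(status, headers, exc_info)
        return app(environ, start_with_header)
    return wrapped
```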

When combined with HTTP Authentication and IP allow‑listing, however, these tools create a robust, multi‑layered defense that makes it far less likely your staging data leaks into the public domain.

From Securing Data to Building Delivery Intelligence

You once saw the HTTPS padlock icon as a symbol of absolute secrecy—a sign that your unfinished site was safe and hidden. Now, you see the full picture. You understand that while this lock secures your shoppers’ data in transit, its very creation announces your site’s existence to the world. Acknowledging this reality is the first step in taking true ownership of your retail brand’s digital footprint.

By replacing the myth of “security by obscurity” with concrete access controls like HTTP authentication, you prevent search engines and AI agents from crawling broken, unfinished versions of your e‑commerce website.

This strict control over what data is public and what remains private is foundational for modern retail. In an era where AI agents and consumers alike demand highly accurate information, allowing bad data to leak from a staging site damages your credibility. The goal is to ensure that when an AI or a shopper interacts with your brand, they only see verified, reliable operational data.

This requirement for data integrity extends far beyond your website’s code; it applies equally to your supply chain and logistics data. Fragmented carrier updates, inaccurate estimated delivery dates, and messy return policies create the same kind of “bad data” that causes AI agents to rank your competitors higher or surface the wrong expectations in AI‑powered search.

Parcel Perform was built to solve this exact problem for global enterprises. By unifying data from over 1,100 carriers into a single, highly secure AI Delivery Experience Platform, we eliminate data fragmentation across the post‑purchase journey. Our infrastructure is ISO 27001 certified, ensuring that your sensitive logistics information is handled with the same rigor you apply to your internal staging environments.

With this secure, harmonized data foundation, our platform utilizes AI Decision Intelligence to optimize your entire post‑purchase journey—powering more accurate delivery promises, proactive exception handling, and smarter customer communications. Industry leaders using these capabilities often reduce “Where Is My Order?” (WISMO) inquiries by significant double‑digit percentages and see noticeable lifts in customer retention. They do not just secure their data; they transform it into their greatest competitive advantage.

To see how standardizing and securing your logistics data can transform your customer experience, book a demo with our team at Parcel Perform.

Frequently Asked Questions

Does the HTTPS padlock make my hidden site private and hard to find?

No. HTTPS (the padlock) proves you are talking to the real site and encrypts data in transit, but it does not hide the site’s existence. Getting an SSL/TLS certificate creates a public record in Certificate Transparency logs, which can reveal your “secret” subdomains to anyone—bots, competitors, and search engines included.

What is Certificate Transparency, and why does it list my subdomain?

Certificate Transparency (CT) is a public, tamper‑evident log of SSL/TLS certificates issued by publicly trusted Certificate Authorities. Browsers require certificates to be logged so mis‑issued or fraudulent certificates can be detected. The side effect is that when you secure staging.yourdomain.com, that hostname appears in public logs where anyone can see it. Mozilla’s Certificate Transparency documentation gives a clear, vendor‑neutral overview of how this works.

How do bots and search engines discover staging sites from these logs?

Automated systems continuously read CT logs and extract newly seen hostnames, especially those with common dev prefixes like dev., test., and staging. Security scanners, and potentially search‑engine crawlers, use this stream to discover new hosts to probe or crawl, so the act of getting a certificate effectively announces your staging URL to the wider web, even if you never link to it. Public tools like Certificate Transparency checkers and subdomain finders make this discovery process visible.

What are the real risks if my staging site gets exposed?

Exposure can spoil launches, confuse AI shopping agents with bad data, and create security vulnerabilities. Competitors can spot upcoming product catalogs, visitors may see buggy checkout flows, and attackers often target staging sites because they are less hardened, expanding your attack surface and increasing the risk of a costly data breach. Summaries of the cost of a data breach in 2024 highlight how quickly those incidents add up.

How can I keep a dev or staging site truly private?

Put it behind a password using HTTP Authentication. This blocks both people and bots before any page code loads. For extra protection, add IP allow‑listing to only allow approved networks (such as corporate offices or VPN ranges), and use a noindex tag to discourage indexing by compliant search engines. Remember, noindex is a visibility hint, not a true security control—your real protection comes from strong access controls at the front door.

If you want to go further and understand how better post‑purchase data can cut WISMO and improve customer service, the Parcel Perform glossary entry on WISMO and insights like our guide to AI‑powered e‑commerce logistics are useful next reads.

About The Author

Parcel Perform is the leading AI Delivery Experience Platform for modern e-commerce enterprises. We help brands move beyond simple tracking to master the entire post-purchase journey, from checkout to returns. Built on the industry's most comprehensive data foundation, we integrate with over 1,100 carriers globally to provide end-to-end logistics transparency. Today, we are pioneering AI Commerce Visibility, a new standard for the age of Generative AI. We believe that in an era where AI agents act as gatekeepers, visibility is no longer just about keywords; it’s about proving operational excellence. We empower brands to optimize their trust signals (like delivery speed and reliability) so they are recognized by AI, recommended by algorithms, and chosen by shoppers.
